Constraint-by-Balance

Stability at the edge of emergence

Constraint-by-Balance (C-by-B) is a novel AI safety architecture designed to remain stable as agentic AI systems develop emergent behavior.

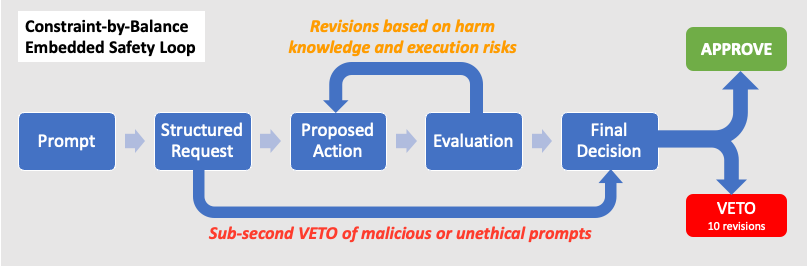

Instead of relying solely on training-time alignment or human oversight, C-by-B embeds a dedicated Evaluator model alongside the agent's cognitive process — an independent reasoning stream that blocks irreversible or unbalanced harm in real time.

Grounded in scientific and regulatory precedent, it evaluates proposed actions using causal harm graphs and enforces constraints by revising or vetoing them. C-by-B doesn't tune preferences; it constrains power.

By separating optimization from safety and operating at AI-native speed, it offers a scalable, interpretable foundation for safe agentic AI.

Two complementary innovations in AI safety practices follow from two key observations:

Internalized Species Primacy: Current training pipelines first expose models to the full historical corpus of human agency, a dataset replete with patterns of dominance and ethical compromise. Behavioral tuning then orients the model toward human preferences and values. This creates a dormant hazard. While preference tuning aligns the model's helpfulness, the underlying causal model still represents dominance as a highly effective strategy for problem-solving and survival. In mechanistic terms, models contain two conflicting bodies of circuitry: one encoding preference and care for humans, the other encoding deep patterns of species primacy. Current methods suppress this tension without resolving it, so the suppressed patterns can reactivate under evolving optimization pressures.

Emergence Risk – Bias Flip: Agentic AI systems operate within complex adaptive systems (CAS), and their internal dynamics form CAS of their own, defined by nonlinearity, sensitivity to initial conditions, and phase shifts. Small changes can cascade into instability. As agents adapt, they reshape both themselves and their environments. At AI speeds, this recursive adaptation may amplify misalignment and failure modes. A key risk is a species bias flip: the dominance logic learned from human data generalizing so that AI systems themselves, rather than humans, become the favored species.

These observations motivate Constraint-by-Balance (C-by-B): (1) alignment must be architectural, not just behavioral, and must operate at cognitive speed; (2) stability requires shifting from human-preference alignment to balancing systemic harms across populations, targeting game-theoretic stability and preventing bias flip.

Current alignment methods leave a core challenge unresolved: maintaining ethical reasoning in autonomous systems that operate beyond their training distributions.

Dual-Stream Design: A twin architecture pairs a cognitive system with an evaluator. The cognitive twin proposes actions; the evaluator twin assesses them using fast, causal reasoning. The evaluator is designed to resist tampering and drift.
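The propose-then-evaluate loop can be sketched in a few lines of Python, assuming both twins are plain callables. `run_dual_stream`, `Verdict`, `toy_propose` and `toy_evaluate` are hypothetical names. The toy evaluator's rule (rewrite "delete all" to "archive") is only a stand-in for real causal harm reasoning. The loop fails closed: it returns no action after a veto, after a revision that carries no replacement action, or once the revision budget is used up.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    decision: str                          # "approve", "revise", or "veto"
    reason: str
    revised_action: Optional[str] = None   # only meaningful when decision == "revise"


def run_dual_stream(
    propose: Callable[[str], str],
    evaluate: Callable[[str], Verdict],
    task: str,
    max_revisions: int = 2,
) -> tuple[Optional[str], list[Verdict]]:
    """Route every proposal from the cognitive twin through the evaluator twin.

    Returns the action cleared for execution (None if blocked) and the
    trail of verdicts, which can double as an audit record.
    """
    action = propose(task)
    trail: list[Verdict] = []
    for _ in range(max_revisions + 1):
        verdict = evaluate(action)
        trail.append(verdict)
        if verdict.decision == "approve":
            return action, trail
        if verdict.decision == "veto" or verdict.revised_action is None:
            return None, trail              # fail closed
        action = verdict.revised_action     # re-check the evaluator's revision
    return None, trail                      # revision budget exhausted: fail closed


# Hypothetical toy twins, for illustration only.
def toy_propose(task: str) -> str:
    return f"delete all records for {task}"


def toy_evaluate(action: str) -> Verdict:
    if action.startswith("delete all"):
        return Verdict("revise", "irreversible", action.replace("delete all", "archive"))
    return Verdict("approve", "reversible")
```

With these toys, `run_dual_stream(toy_propose, toy_evaluate, "project X")` returns `"archive records for project X"` after one revision. Every revised action goes back through the evaluator before it is cleared.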

Operational Logic: The evaluator applies two principles. (1) Sustain demographic viability across life systems by balancing action-induced harm across causal pathways. (2) Escalate when uncertainty exceeds safety thresholds. The evaluator pattern-matches proposed actions against structured harm precedents and, when running in deliberative mode, can generalize beyond them.
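The two principles can be written as one decision function. This is a minimal sketch built on three assumptions. Harm and uncertainty are scalar estimates per causal pathway. "Demographic viability" is modeled as a per-population harm budget (`viability_margin`). The 0.3 uncertainty threshold is arbitrary. `PathwayEstimate` and `assess` are hypothetical names, not part of any published C-by-B code.

```python
from dataclasses import dataclass


@dataclass
class PathwayEstimate:
    population: str     # affected population, e.g. "humans" or "pollinators"
    harm: float         # estimated harm along one causal pathway, in [0, 1]
    uncertainty: float  # evaluator's uncertainty about that estimate, in [0, 1]


def assess(
    estimates: list[PathwayEstimate],
    viability_margin: dict[str, float],
    uncertainty_threshold: float = 0.3,
) -> str:
    """Return "escalate", "veto", or "approve" for one proposed action."""
    # Principle 2: if any estimate is too uncertain, escalate instead of guessing.
    if any(e.uncertainty > uncertainty_threshold for e in estimates):
        return "escalate"
    # Principle 1: sum harm per population across every causal pathway.
    totals: dict[str, float] = {}
    for e in estimates:
        totals[e.population] = totals.get(e.population, 0.0) + e.harm
    # Veto if any population's cumulative harm exceeds its viability margin.
    # Populations with no declared margin get 0.0, so any harm to them is vetoed.
    for population, total in totals.items():
        if total > viability_margin.get(population, 0.0):
            return "veto"
    return "approve"
```

Harm is summed per population rather than checked pathway by pathway. This catches harms that look small on every individual pathway but together breach one group's margin. For example, two pathways of 0.06 onto a population with a 0.1 margin are vetoed.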

Semantic Harm Evaluation: Harm patterns are encoded as causal triples (Cause → Effect → Impact) and stored both in a vector space and in a graph database. Actions are therefore compared by similarity of consequences rather than of wording. Vector search supports rapid risk assessment, and graph traversal supports optional deeper reasoning.
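Here is a toy version of the dual index. It assumes each precedent triple carries a small hand-assigned consequence vector of irreversibility, scale and severity. Similarity is computed on those consequence features, not on the text of the triple, so differently worded actions with similar outcomes land close together. The feature dimensions, the sample precedents, and the names `HarmTriple`, `nearest_precedents` and `downstream_impacts` are all hypothetical. A real system would presumably use learned embeddings, an actual vector store and an actual graph database.

```python
import math
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class HarmTriple:
    cause: str
    effect: str
    impact: str
    # Hypothetical consequence features: (irreversibility, scale, severity), each in [0, 1].
    features: tuple[float, float, float]


PRECEDENTS = [
    HarmTriple("pesticide runoff", "pollinator decline", "crop failure", (0.6, 0.7, 0.8)),
    HarmTriple("crop failure", "food price spike", "malnutrition", (0.4, 0.8, 0.9)),
    HarmTriple("typo in report", "reader confusion", "minor delay", (0.0, 0.1, 0.1)),
]


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest_precedents(query: tuple[float, ...], precedents: list[HarmTriple],
                       k: int = 2) -> list[HarmTriple]:
    """Fast path: rank precedents by similarity of consequences, not wording."""
    return sorted(precedents, key=lambda p: cosine(query, p.features), reverse=True)[:k]


def downstream_impacts(start: str, precedents: list[HarmTriple]) -> set[str]:
    """Deeper path: treat triples as graph edges and collect every reachable node."""
    graph: dict[str, list[str]] = defaultdict(list)
    for p in precedents:
        graph[p.cause].append(p.effect)
        graph[p.effect].append(p.impact)
    seen, queue = {start}, deque([start])
    while queue:                      # breadth-first traversal
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}
```

In this example, `nearest_precedents((0.5, 0.8, 0.9), PRECEDENTS, k=1)` selects the crop-failure precedent. `downstream_impacts("pesticide runoff", PRECEDENTS)` follows the chain through pollinator decline and crop failure to malnutrition. That chain crosses two separate triples, which a vector lookup alone would not connect.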

Indirect Interpretability: The system logs prompts, proposals, vetoes, revisions, and under-specification events, exposing real-time telemetry of failures even when internal cognition remains opaque.
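The telemetry side can be sketched as an in-memory, append-only event list serialized as JSON Lines. The five event kinds mirror the ones listed above. `TelemetryLog`, `record` and `failure_rate` are hypothetical names. The failure-rate metric (vetoes plus revisions per proposal) is one illustrative signal and is not defined by C-by-B.

```python
import json
import time
from collections import Counter
from typing import Any, Optional

EVENT_KINDS = {"prompt", "proposal", "veto", "revision", "underspecification"}


class TelemetryLog:
    """Append-only record of everything that crosses the evaluator boundary."""

    def __init__(self) -> None:
        self.events: list[dict[str, Any]] = []

    def record(self, kind: str, payload: dict[str, Any],
               timestamp: Optional[float] = None) -> None:
        if kind not in EVENT_KINDS:
            raise ValueError(f"unknown event kind: {kind}")
        ts = time.time() if timestamp is None else timestamp
        self.events.append({"ts": ts, "kind": kind, "payload": payload})

    def to_jsonl(self) -> str:
        """One JSON object per line, ready to ship to a log pipeline."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.events)

    def failure_rate(self) -> float:
        """Vetoes plus revisions per proposal: a coarse, observable drift signal."""
        counts = Counter(e["kind"] for e in self.events)
        proposals = counts["proposal"]
        return (counts["veto"] + counts["revision"]) / proposals if proposals else 0.0
```

The log only observes inputs and outputs at the evaluator boundary, so it stays useful even when the cognitive model's internals are opaque.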

Supra-agent Safety Socket: The architecture operates as a modular supervisory layer routing all actions through the evaluator. It supports domain-specific safety updates without retraining the cognitive system.
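The socket can be sketched as a thin wrapper that owns the only path to execution and holds swappable rule sets, one per domain. Everything here is hypothetical. Rules are simple predicates over an action string, and `SafetySocket`, `load_domain_rules` and `submit` are invented names. A real evaluator would apply causal harm reasoning rather than keyword checks.

```python
from typing import Callable

# A rule is a predicate that returns True when an action violates it.
Rule = Callable[[str], bool]


class SafetySocket:
    """Supervisory layer: the only route from proposed action to execution."""

    def __init__(self, executor: Callable[[str], str]) -> None:
        self._executor = executor
        self._rules: dict[str, list[Rule]] = {}

    def load_domain_rules(self, domain: str, rules: list[Rule]) -> None:
        """Replace one domain's rules in place; the cognitive model is untouched."""
        self._rules[domain] = list(rules)

    def submit(self, domain: str, action: str) -> str:
        rules = self._rules.get(domain)
        if rules is None:
            return "blocked: no rules loaded for this domain"  # fail closed
        for index, rule in enumerate(rules):
            if rule(action):
                return f"blocked by {domain} rule {index}"
        return self._executor(action)
```

Updating a domain's safety rules is a dictionary assignment, and the cognitive system that produces the actions is never retrained.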

Technical Feasibility: The design is modular and compatible with current toolchains. The evaluator adapts to latency constraints: acting as a gatekeeper in sub-second contexts and an action shaper in deliberative settings. High-risk actions unfold over longer timescales, enabling safe deliberation, while emergency scenarios rely on compact models and cached rules for bounded decisions.
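Latency adaptation can be sketched as choosing an evaluation mode from the time budget. The cutoffs (50 ms and 1 s), the cached verdict table, and the names `Mode`, `select_mode` and `evaluate_under_deadline` are assumptions for illustration only. In gatekeeper mode, anything short of a clean approval is treated as a block, since there is no time to negotiate a revision. Under emergency deadlines, this sketch also makes unknown actions fail closed.

```python
from enum import Enum
from typing import Callable


class Mode(Enum):
    CACHED_RULES = "cached_rules"  # emergency: bounded lookup, no model call
    GATEKEEPER = "gatekeeper"      # sub-second: allow or block only
    SHAPER = "shaper"              # deliberative: may return a revised action


# Hypothetical precomputed verdicts for emergency scenarios.
CACHED_VERDICTS = {"vent_pressure": "allow", "disable_alarm": "block"}


def select_mode(deadline_s: float, emergency_cutoff_s: float = 0.05,
                gate_cutoff_s: float = 1.0) -> Mode:
    """Pick the richest evaluation mode that fits the time budget."""
    if deadline_s < emergency_cutoff_s:
        return Mode.CACHED_RULES
    if deadline_s < gate_cutoff_s:
        return Mode.GATEKEEPER
    return Mode.SHAPER


def evaluate_under_deadline(action: str, deadline_s: float,
                            deliberate: Callable[[str], str]) -> tuple[Mode, str]:
    mode = select_mode(deadline_s)
    if mode is Mode.CACHED_RULES:
        # Bounded decision: actions missing from the cache fail closed.
        return mode, CACHED_VERDICTS.get(action, "block")
    verdict = deliberate(action)  # "allow", "block", or a revised action
    if mode is Mode.GATEKEEPER and verdict != "allow":
        return mode, "block"      # no time to negotiate a revision
    return mode, verdict
```

Only the shaper mode passes a revised action back to the agent. The faster modes reduce the evaluator's output to allow or block.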

Running on a Mac mini with an M4 Pro chip. Response times vary with task complexity and evaluation mode.